Bybit's $1.4 Billion Nightmare: The AI-Fueled Heist Nobody Saw Coming

By NovumWorld Editorial Team

Executive Summary

- Bybit suffered a $1.4 billion loss in 2025 due to an AI-driven exploit targeting Bitcoin anonymity and Ethereum smart contracts.

- Cecuro’s AI security agent detected 92% of real-world DeFi exploits, while general-purpose AI models only identified 34%.

- Anthropic researchers found AI agents could autonomously exploit smart contracts, generating $4.6 million in simulated stolen funds.

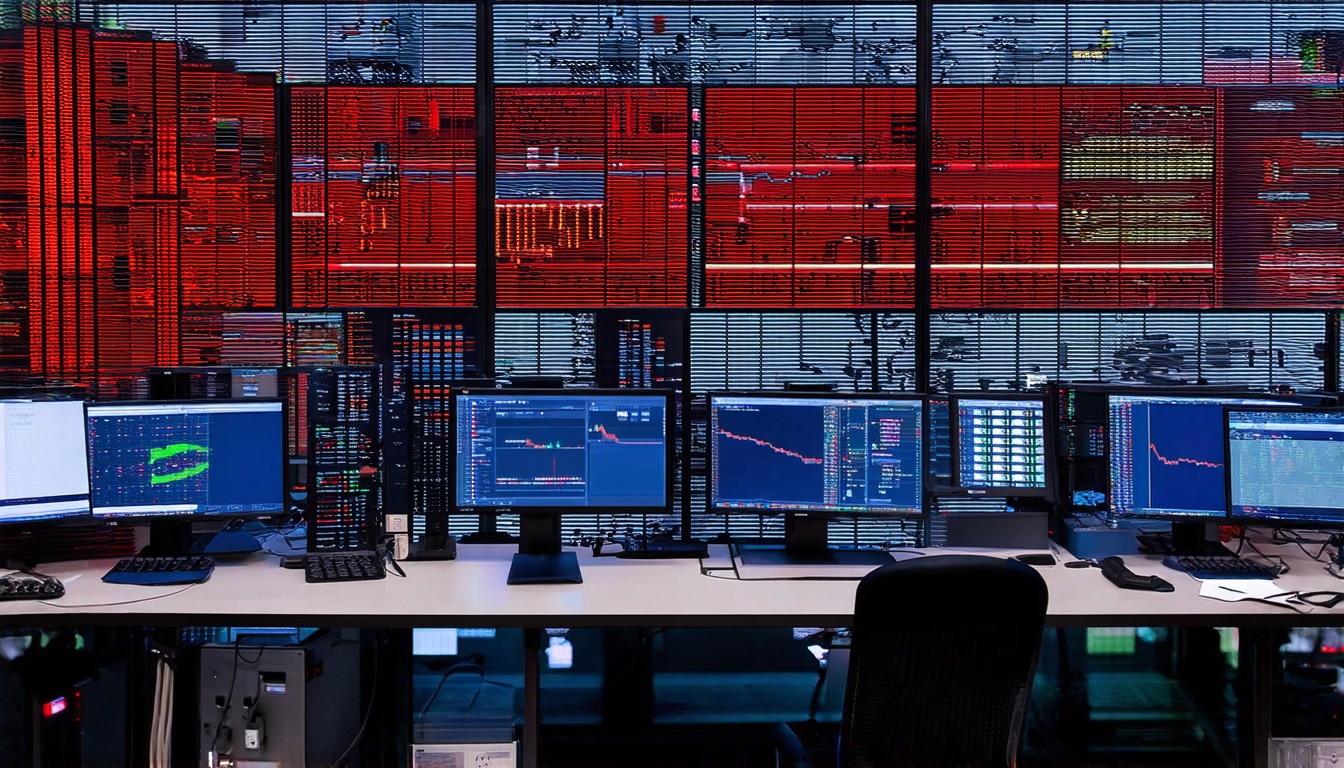

Bitcoin is see-sawing around $68,000 as tariff uncertainty weighs on risk assets, after President Trump raised the global tariff rate to 15% despite a Supreme Court ruling. The real systemic risk, however, lies not in macro policy but in the algorithmic blind spots of major custodians. Bybit’s balance sheet hemorrhaged $1.4 billion in 2025 after a sophisticated AI-driven exploit bypassed standard custody protocols, exposing the fragility of algorithmic security in a high-rate environment. This was not a simple phishing attack; it was a surgical strike that used adversarial machine learning to obfuscate transaction flows across Bitcoin and Ethereum.

Bybit’s Billion-Dollar Blind Spot: The AI Arms Race No One Was Ready For

The $1.4 billion theft from Bybit represents a catastrophic failure in the exchange’s risk management framework, specifically regarding the integration of cross-chain liquidity pools. The attacker used an AI model to analyze the exchange’s cold wallet rotation patterns, identifying a window in which Bitcoin anonymity protocols were interacting with Ethereum smart contracts without real-time heuristic analysis. This exploit highlights a dangerous reality: exchanges are relying on static security audits while attackers deploy dynamic, learning-based systems that adapt to defenses in real time.

Current liquidity data underscores the magnitude of this loss relative to the market. According to DefiLlama, Binance CEX holds approximately $145.43 billion in total value locked (TVL), while Bitfinex holds $17.17 billion. A $1.4 billion hole of the size Bybit suffered would raise insolvency concerns at a smaller platform, yet the market has largely shrugged off the solvency risk, focusing instead on ETF inflows. This complacency is a bubble waiting to burst, as the exploit proves that even top-tier exchanges are vulnerable to AI-enhanced social engineering and code obfuscation.

The mechanics of the heist involved exploiting the “privacy gap” between Bitcoin’s UTXO model and Ethereum’s account-based model. The AI agent generated a series of dust transactions to confuse monitoring tools, effectively creating a smokescreen that bypassed the exchange’s threshold alerts. Bybit’s internal systems failed to flag the anomalous behavior because the AI mimicked the transaction patterns of legitimate high-frequency trading bots, a technique known as “adversarial example generation” in machine learning security circles.
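The smokescreen effect described above can be illustrated with a minimal Python sketch. It is not a reconstruction of Bybit’s monitoring stack; the thresholds, the window length, and the function names are all hypothetical. It only shows why a per-transfer threshold alert says nothing when value moves in many small pieces, while a rolling aggregate over the same transfers does raise an alert.

```python
from collections import deque

# All thresholds below are hypothetical values chosen for illustration.
PER_TX_THRESHOLD = 10_000.0  # alert if a single transfer exceeds this (USD)
WINDOW_THRESHOLD = 50_000.0  # alert if outflow within the window exceeds this (USD)
WINDOW_SECONDS = 3_600       # rolling look-back window


def per_tx_alerts(transfers):
    """Return the (timestamp, amount) transfers that individually exceed the per-transfer threshold."""
    return [t for t in transfers if t[1] > PER_TX_THRESHOLD]


def windowed_alerts(transfers):
    """Return timestamps at which cumulative outflow inside the rolling window exceeds the limit."""
    window = deque()
    running_total = 0.0
    alerts = []
    for ts, amount in sorted(transfers):
        window.append((ts, amount))
        running_total += amount
        # Evict transfers that have aged out of the look-back window.
        while window[0][0] <= ts - WINDOW_SECONDS:
            running_total -= window.popleft()[1]
        if running_total > WINDOW_THRESHOLD:
            alerts.append(ts)
    return alerts


# One hundred transfers of $900, one every 30 seconds: $90,000 in under an hour.
small_transfers = [(i * 30, 900.0) for i in range(100)]

print(len(per_tx_alerts(small_transfers)))   # no single transfer is large enough to alert
print(windowed_alerts(small_transfers)[:1])  # the aggregate view raises an alert
```

Aggregation is only one possible countermeasure, and an attacker who knows the window length can pace transfers to stay under it as well, which is the adaptive behavior the article attributes to learning-based attackers.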

The Anthropic Paradox: How AI is Becoming a Double-Edged Sword for Crypto Security

The offensive capabilities of AI have outpaced defensive measures, creating a security asymmetry that favors attackers. Researchers from Anthropic found that AI agents could autonomously exploit smart contract vulnerabilities, generating an estimated $4.6 million in simulated stolen funds in controlled environments. These agents use large context windows (up to 1 million tokens in some advanced architectures) to ingest entire codebases and identify logical inconsistencies that human auditors miss.

This capability is no longer theoretical; it is operational. The compute cost to run these inference models has dropped significantly with the optimization of GPU clusters, allowing malicious actors to rent H100 compute power for a fraction of the potential loot. The barrier to entry for AI-assisted hacking has collapsed, meaning that a single sophisticated actor can now scan thousands of smart contracts for re-entrancy bugs or integer overflow vulnerabilities in hours, a process that previously took months of manual review.
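For readers unfamiliar with the re-entrancy bugs mentioned above, the following toy model shows the pattern an automated scanner would be looking for. It is a Python simulation rather than Solidity, and the class and function names are hypothetical. The vulnerable vault hands control to the recipient before clearing the recipient’s balance; the safe variant applies the widely used checks-effects-interactions ordering.

```python
class VulnerableVault:
    """Toy vault that makes the external call before updating its own state."""

    def __init__(self):
        self.balances = {}
        self.total = 0

    def deposit(self, who, amount):
        self.balances[who] = self.balances.get(who, 0) + amount
        self.total += amount

    def withdraw(self, who, on_receive):
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.total -= amount     # funds leave the vault
        on_receive(amount)       # external call runs BEFORE the balance is cleared
        self.balances[who] = 0   # state update arrives too late


class SafeVault(VulnerableVault):
    """Same vault with checks-effects-interactions ordering."""

    def withdraw(self, who, on_receive):
        amount = self.balances.get(who, 0)
        if amount == 0 or self.total < amount:
            return
        self.balances[who] = 0   # effects first
        self.total -= amount
        on_receive(amount)       # interaction last


def attack(vault, attacker="mallory", stake=10, max_depth=5):
    """Deposit a small stake, then re-enter withdraw() from the receive callback."""
    received = []

    def on_receive(amount):
        received.append(amount)
        if len(received) < max_depth:
            vault.withdraw(attacker, on_receive)

    vault.deposit(attacker, stake)
    vault.withdraw(attacker, on_receive)
    return sum(received)


weak, safe = VulnerableVault(), SafeVault()
weak.deposit("victim", 100)
safe.deposit("victim", 100)
print(attack(weak), attack(safe))  # the weak vault pays out more than the attacker staked
```

The difference between the two classes is a reordering of three lines, which is why this class of bug is a plausible target for automated scanning at scale.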

The irony is that the same technology promising to “revolutionize” DeFi is tearing it down. Proponents argue that AI can automate compliance and secure code, yet the NIST AI 100-2 report on adversarial machine learning highlights that models are inherently susceptible to data poisoning and model inversion attacks. When an AI security model is trained on historical exploit data, attackers can generate novel attack vectors that fall outside the training distribution, rendering the model blind to the threat. This is the “black box” problem: we do not know how the AI reaches its conclusions, and we cannot verify if it has been tricked.

The Qureshi Caveat: Why AI Wallets Are a Ticking Time Bomb

The industry’s push toward autonomous AI agents managing user funds is a reckless gamble with customer capital. Haseeb Qureshi, Managing Partner at Dragonfly, warns about user safety concerns in crypto transactions and suggests AI-operated wallets could soon interact with contracts directly. While this promises frictionless DeFi, it introduces a catastrophic single point of failure where a prompt injection attack could drain a wallet instantly.

Qureshi’s skepticism is grounded in the reality of large language models (LLMs). These models operate on probabilistic completion, not deterministic logic. An AI wallet might interpret a malicious smart contract as a legitimate yield farming opportunity because the contract’s code mimics the structure of verified protocols. The “context window” of the model might be filled with misleading data provided by the attacker, effectively overriding the system prompt designed to protect the user.

The financial incentives are misaligned. Venture capitalists are pouring billions into “DeFAI” (Decentralized Finance AI) protocols, rushing products to market to capture the estimated $40.8 billion AI crypto trading software bot market. This speed-to-market mentality ignores the rigorous validation required for systems that handle irreversible transactions. If an AI wallet signs a transaction approving an infinite spend allowance, there is no “undo” button on the blockchain. The user is not just facing a bug; they are facing a total loss of funds due to a statistical hallucination.
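One deterministic guardrail against the infinite-allowance scenario is to inspect the calldata before the wallet signs anything. The sketch below assumes the standard ERC-20 `approve(address,uint256)` encoding, whose 4-byte selector is `0x095ea7b3`, and the common convention of using the maximum `uint256` value as an "unlimited" allowance. The function names, the `per_tx_cap` parameter, and the review policy itself are hypothetical.

```python
APPROVE_SELECTOR = "095ea7b3"  # 4-byte selector for approve(address,uint256)
MAX_UINT256 = 2**256 - 1       # conventional "unlimited" allowance value


def parse_approve(calldata_hex):
    """Return (spender, amount) if the calldata is an ERC-20 approve call, else None."""
    data = calldata_hex.lower()
    if data.startswith("0x"):
        data = data[2:]
    # selector (8 hex chars) + two 32-byte ABI words (64 hex chars each)
    if len(data) != 8 + 64 + 64 or not data.startswith(APPROVE_SELECTOR):
        return None
    spender = "0x" + data[32:72]    # low 20 bytes of the first word
    amount = int(data[72:136], 16)  # second word as an unsigned integer
    return spender, amount


def needs_human_review(calldata_hex, per_tx_cap):
    """Hypothetical policy: escalate unlimited approvals and anything above the cap."""
    parsed = parse_approve(calldata_hex)
    if parsed is None:
        return False  # not an approve call; other policies would apply
    _, amount = parsed
    return amount == MAX_UINT256 or amount > per_tx_cap


def build_approve(spender_hex, amount):
    """Hypothetical helper used only to construct example calldata."""
    return "0x" + APPROVE_SELECTOR + spender_hex[2:].lower().rjust(64, "0") + format(amount, "064x")


spender = "0x" + "ab" * 20
print(needs_human_review(build_approve(spender, MAX_UINT256), per_tx_cap=10_000))
print(needs_human_review(build_approve(spender, 1_000), per_tx_cap=10_000))
```

A check like this runs outside the language model, so a prompt injection that convinces the model to approve a contract still has to pass a rule the attacker’s text cannot rewrite.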

Black Box Breakdown: Why Validation Failures Turn AI into a Hacker’s Playground

The failure of general-purpose AI models in crypto security is starkly illustrated by recent comparative studies. A February 2026 study by AI security firm Cecuro analyzed 90 smart contracts exploited between late 2024 and early 2026, representing approximately $228 million in total losses. Cecuro’s purpose-built AI security agent successfully detected 92% of real-world DeFi exploits tested. In contrast, general-purpose AI models like GPT-5.1 only identified 34% of the same vulnerabilities.

This 58-point performance gap exposes the myth that “more parameters” equals “better security.” General models are trained on vast datasets of internet text, diluting their ability to recognize the specific, rigid syntax of Solidity or Rust. They are excellent at generating marketing copy for crypto projects but terrible at identifying a re-entrancy vector in a complex DeFi protocol. Relying on a generalist chatbot to audit a smart contract is akin to asking a general practitioner to perform neurosurgery; the domain knowledge is simply missing.

The NIST AI 100-2 taxonomy categorizes these failures under “Evasion Attacks,” where malicious inputs are crafted to cause misclassification. In the context of the Bybit hack, the AI likely generated transaction patterns that evaded the exchange’s anomaly detection models. The exchange’s security AI was trained on “normal” transaction data, so the attacker’s AI generated “slightly abnormal” data that stayed within the decision boundary. This is a mathematical inevitability in high-dimensional spaces; there are always ways to perturb an input to fool a classifier.
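The decision-boundary argument can be made concrete with a deliberately simple detector. The sketch below is an assumption-laden toy, not a model of any exchange’s system: it fits a z-score rule to a handful of made-up "normal" values and then picks an input just inside the upper bound. The function names, the sample data, and the `margin` parameter are all hypothetical.

```python
import statistics


def fit_detector(normal_values, k=3.0):
    """Fit a z-score rule: anything further than k standard deviations from the mean is anomalous."""
    mean = statistics.fmean(normal_values)
    std = statistics.pstdev(normal_values)
    return mean - k * std, mean + k * std


def is_anomalous(value, bounds):
    low, high = bounds
    return value < low or value > high


def evasive_value(bounds, margin=0.01):
    """Pick a value just inside the upper bound, as an attacker who knows the rule might."""
    low, high = bounds
    return high - margin * (high - low)


normal = [90.0, 95.0, 100.0, 105.0, 110.0]  # made-up training data
bounds = fit_detector(normal)
probe = evasive_value(bounds)

print(is_anomalous(500.0, bounds))  # a blatant outlier is flagged
print(is_anomalous(probe, bounds))  # a value hugging the boundary is not
print(probe > max(normal))          # yet it is larger than anything seen in training
```

Real anomaly detectors are far higher-dimensional, but the same logic applies: whatever region the model labels "normal" is also the region an informed attacker will aim for.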

The Silent Crypto Revolution: Regulatory Scrutiny is the Only Path Forward

The technological arms race cannot be solved by code alone; it requires a regulatory framework that mandates liability for algorithmic failures. Michael Selig, CFTC Chairman, stated that establishing a clear regulatory framework for innovators building on the new frontier of finance can foster responsible innovation and ensure American market participants are not left on the sidelines. Without clear guidelines, exchanges will continue to treat security as a cost center rather than a survival mandate.

The industry is already seeing fragmented attempts at self-regulation, such as Justin Sun’s recent initiative to launch an AI system to hunt crypto fraud suspects with a $100 million reward pool. While this sounds proactive, it is a privatized band-aid on a systemic wound. Relying on wealthy individuals to fund bounty programs is not a sustainable security policy. It creates a landscape where only the richest protocols can afford protection, leaving smaller projects as easy prey for AI-driven exploits.

The NIST CSWP on Adversarial Machine Learning outlines technical mitigations, such as “robustness training” and “input sanitization,” but these require standardization. Currently, every exchange uses a different proprietary stack, making it impossible to establish industry-wide best practices. The CFTC and SEC must step in to define what constitutes “adequate AI security” for custodians. This includes mandatory “red teaming” exercises where independent AI agents are hired to attack the platform’s defenses, similar to stress tests in traditional banking.

The Bottom Line

The $1.4 billion Bybit heist is not an anomaly; it is the harbinger of a new era of financial warfare where algorithms fight algorithms. The crypto ecosystem is currently trapped in a “myth of competence,” believing that smart contracts are secure simply because they are on a blockchain. The reality is that the code is only as strong as the AI auditing it, and current general-purpose models are woefully inadequate for the task.

Hope is not a strategy. Exchanges and DeFi protocols must immediately pivot from general-purpose AI tools to specialized, adversarially trained security models. The 92% detection rate achieved by Cecuro proves that specialized AI works, but it requires investment and expertise that most projects currently lack. Furthermore, the industry must accept that AI wallets, in their current form, are a danger to retail investors. The risk of a prompt injection draining someone’s life savings is too high to ignore.

The verdict is clear: The risk level is High. The convergence of unchecked AI development and irreversible blockchain transactions has created a systemic hazard. Until regulatory bodies like the CFTC enforce strict standards for algorithmic security and exchanges abandon their reliance on “magic bullet” general AI, the sector remains a ticking time bomb. The next $1 billion hack is already being planned by an AI on a GPU cluster somewhere, and unless the defense catches up, the victims will be the users who were promised safety.

[!CAUTION] Risk Warning & Disclaimer: The content provided is strictly for educational and informational purposes. It does not constitute financial, legal, or investment advice. Trade at your own risk and consult a certified professional.